The Greatest Counter-Strike Teams of All-Time

In an era of shelter-in-place, we’ve seen the temporary shutdown of many sports leagues. The biggest sporting news of the day seems to revolve around discussions of greatness. These events led me to eSports, specifically Counter-Strike, and to a question: who were the greatest Counter-Strike teams of all time?

Counter-Strike seems more popular than ever, with over a million concurrent players and large prize pools. The scene has its own news and betting odds are available from major betting providers. It has all the things a traditional sports fan might want, even though the traditional sports and eSports communities seem completely disparate.

So I decided to take a leaf from Bill “’86 Celtics” Simmons’ book and ask: who are the ‘11 Barcelona, the ‘85 Oilers, the ‘39 Yankees, the ‘07 Patriots, the ‘17 Warriors, and the ‘02 UConn Huskies of Counter-Strike? If you don’t agree with those as the GOATs, well, that’s exactly why this is fun.

Such breakdowns exist for many sports, but I couldn’t find a single list for Counter-Strike. There are annual player-of-the-year lists and regular updates to the current world rankings, but what about the all-time greats? Top 50 Players in CS History (2014) has 9M+ YouTube views. But a list of the greatest teams? I guess I have to make my own.

We’ll follow an approach that FiveThirtyEight took for baseball, basketball, and football. This consists of building an imperfect quantitative rating and skipping the thousands of hours of gameplay I would have to watch to make a more refined decision.

How the ratings work

We’ll be building a basic Elo rating system. I’ve written about the technical details before, so refer to those for more information. In layman’s terms: everyone’s rating is represented by a single number. When a team beats another, the winner takes points from the loser; the more unlikely the victory, the more points get exchanged. In absurdly technical jargon, Elo is an online approximation to logistic regression using conservative proportional control; the conservation implies that the regression version of this task is solving for the Rayleigh quotient. There, glad I got that out of the way.
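For readers who prefer code to jargon, here is a minimal sketch of the textbook Elo exchange described above. It is not the tuned algorithm used for the rankings in this article: the function names, the 400-point scale and `k = 20` are conventional placeholder values, assumed purely for illustration.

```python
def expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Probability that A beats B under the standard logistic Elo curve.

    The 400-point scale is the conventional chess value (an assumption here).
    """
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / scale))


def update(rating_winner: float, rating_loser: float, k: float = 20.0):
    """Move points from the loser to the winner.

    The less likely the win was, the more points change hands.
    k is a placeholder step size, not a value from the article.
    """
    p_win = expected_score(rating_winner, rating_loser)
    delta = k * (1.0 - p_win)
    # Points are conserved: whatever the winner gains, the loser gives up.
    return rating_winner + delta, rating_loser - delta


if __name__ == "__main__":
    print(update(1500.0, 1500.0))  # even matchup
    print(update(1400.0, 1600.0))  # upset: underdog wins and takes more points
```

Because the same `delta` is added to one side and subtracted from the other, the total number of rating points in the system never changes, which is the "conservation" mentioned above.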

So how do we get all the matches? We used an export of Liquipedia, which has Counter-Strike games going back to 2000. Each team maintains a separate rating for each game (Source, 1.6, GO). The dataset is incomplete and not uniformly sampled (more recent tournaments are better documented), but I was able to parse about 100,000 matchups, which should be good enough to understand the landscape. All editions of Counter-Strike are combined and treated equally.

I set up a general Elo algorithm with several free parameters, then optimized those parameters by maximizing the log-likelihood of future match predictions. It’s important to think about how different events and cases are handled. The scoring function was \( \sum_i T_i \log(p_i) (r_A + r_B) \), where \(p_i\) is the predicted probability of the true winner of match \(i\), \(T_i\) is a multiplier that gives higher-tier and LAN events more weight, and \(r_A\) and \(r_B\) are the ratings of the two teams, included to focus on correctly predicting matchups between top teams.
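The scoring function above can be sketched in a few lines. The `Prediction` container and its field names are hypothetical, introduced only for this example, and the sketch assumes the ratings used are the ones the teams held when the prediction was made.

```python
import math
from dataclasses import dataclass


@dataclass
class Prediction:
    p_winner: float     # p_i: predicted probability of the team that actually won
    tier_weight: float  # T_i: multiplier for event tier and LAN status
    rating_a: float     # r_A: rating of one team before the match
    rating_b: float     # r_B: rating of the other team before the match


def weighted_log_likelihood(predictions) -> float:
    """Sum of T_i * log(p_i) * (r_A + r_B) over all matches.

    Always <= 0; values closer to zero mean better predictions. Mistakes on
    matches between highly rated teams are penalized the most.
    """
    return sum(
        m.tier_weight * math.log(m.p_winner) * (m.rating_a + m.rating_b)
        for m in predictions
    )
```

A parameter search would then keep whichever Elo configuration gives the highest (least negative) score on held-out matches.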

Some examples of the settings: if teams had a 50% chance of winning an S-Tier LAN and won 2-0, they would swap 8 points; 80%/20% matchups would see 2 points (if the 80% favorite won) or 20 points (if the 20% underdog won). If the win was 2-1, it’d be 6, 1 and 17 points in the previous 3 examples. This formula was \( p_{l}^2 ( w^2 A_{\text{Tier},\text{LAN}} + B_{\text{Tier},\text{LAN}})\), with \( p_{l}\) being the match loser’s predicted winning probability, \( w\) being the fraction of maps won, and \( A\) and \( B\) being constants with different values for each of the combinations of On/Offline and S/A-Tier.
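As a rough illustration of that formula, here is a small sketch. The constants `A_EXAMPLE` and `B_EXAMPLE` are not the fitted values: they are placeholders I picked so that an even matchup swaps 8 points for a sweep and 6 for a 2-1 win, and they only approximately match the other figures quoted above. The sketch also assumes \(w\) is the winner's share of maps.

```python
# Placeholder constants for one Tier/LAN combination (assumed, not fitted).
A_EXAMPLE = 14.4
B_EXAMPLE = 17.6


def points_exchanged(p_loser: float, maps_won_fraction: float,
                     a: float = A_EXAMPLE, b: float = B_EXAMPLE) -> float:
    """Points swapped after a match: p_l^2 * (w^2 * A + B).

    p_loser           -- the loser's predicted probability of winning (p_l)
    maps_won_fraction -- share of maps taken by the winner (w), e.g. 2/3 for 2-1
    """
    return p_loser ** 2 * (maps_won_fraction ** 2 * a + b)


if __name__ == "__main__":
    for p_loser in (0.5, 0.2, 0.8):
        sweep = points_exchanged(p_loser, 1.0)
        close = points_exchanged(p_loser, 2.0 / 3.0)
        print(f"p_l={p_loser:.1f}: 2-0 swaps {sweep:.1f}, 2-1 swaps {close:.1f}")
```

The squared \(p_l\) term is what makes upsets so much more valuable than expected wins, while the \(w^2\) term rewards clean sweeps over narrow series.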

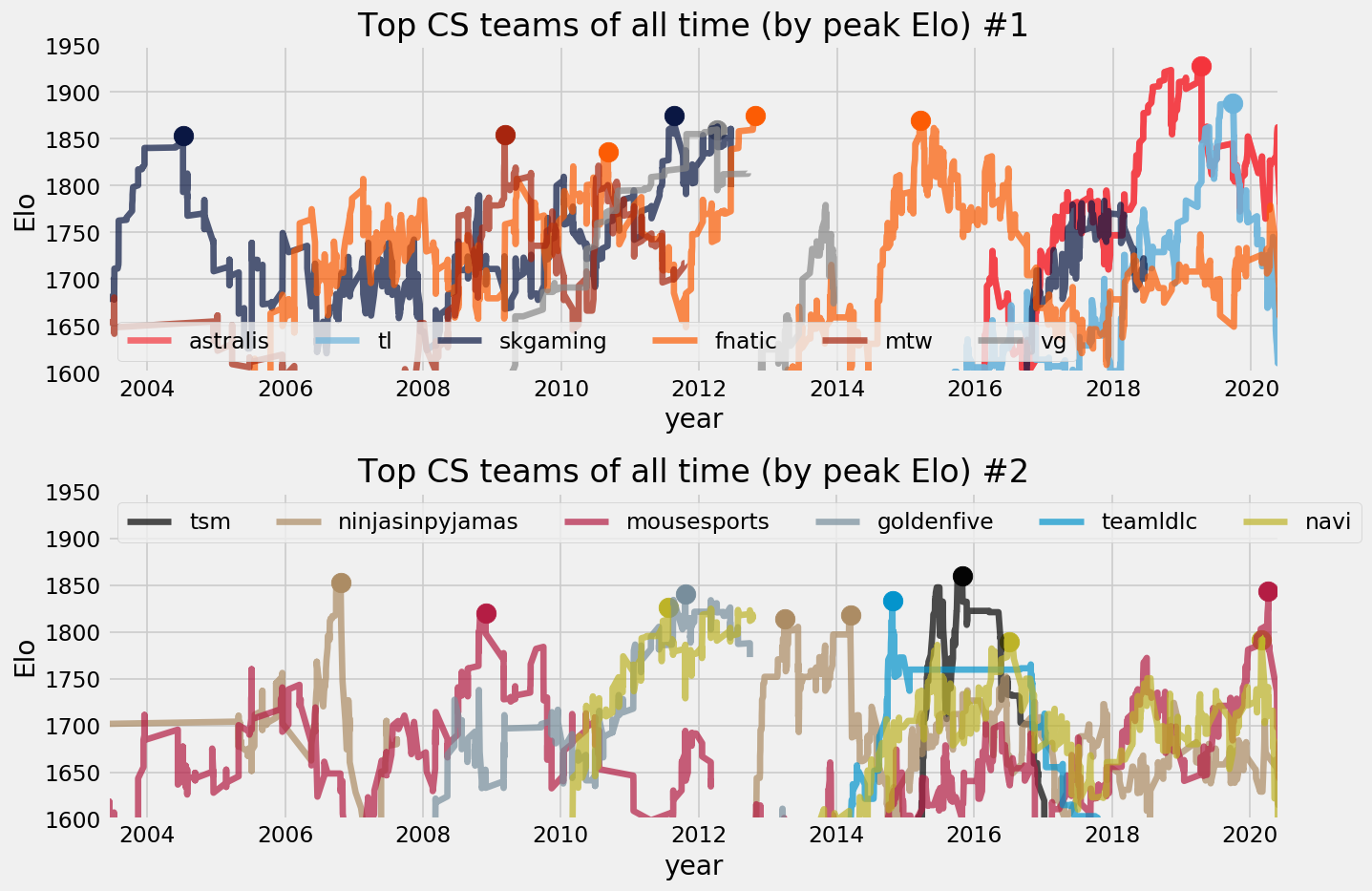

The highest Elo peaks by Counter-Strike Teams

Without further ado, here are the top 20 teams by peak Elo:

| Team | Date | Elo | Members |
|---|---|---|---|
| Astralis | 2019-04-12 | 1928 | device dupreeh Xyp9x gla1ve Magisk |
| Team Liquid | 2019-09-26 | 1888 | nitr0 EliGE Twistzz NAF Stewie2K |
| SK Gaming | 2011-08-19 | 1875 | GeT_RiGhT f0rest Delpan face RobbaN |
| fnatic | 2012-10-21 | 1875 | trace Friis karrigan FYRR73 MODDII |
| fnatic | 2015-03-15 | 1870 | flusha JW pronax olofmeister KRIMZ |
| TeamSoloMid | 2015-10-29 | 1860 | cajunb device Xyp9x karrigan dupreeh |
| VeryGames | 2012-04-05 | 1860 | SmithZz NBK shoxie RpK Ex6TenZ |
| mTw | 2009-03-07 | 1854 | ave mJe zonic Sunde whimp |
| NiP | 2006-10-21 | 1853 | SpawN RobbaN walle ins zet |
| SK Gaming | 2004-07-09 | 1853 | HeatoN Potti fisker SpawN ahl |
| mouz | 2020-04-07 | 1843 | chrisJ ropz karrigan woxic frozen |
| Golden Five | 2011-12-11 | 1840 | TaZ kuben Loord pasha NEO |
| fnatic | 2010-08-14 | 1836 | Gux cArn dsn f0rest Get_RiGhT |
| TeamLDLC | 2014-10-24 | 1834 | shox Happy NBK kioShiMa SmithZz |
| Natus Vincere | 2011-07-23 | 1826 | starix ceh9 Zeus markeloff Edward |
| FaZe | 2018-02-23 | 1821 | rain karrigan NiKo GuardiaN olofmeister |
| mouz | 2008-11-28 | 1821 | cyx Kapio Tixo gobb gore |
| NiP | 2014-03-15 | 1818 | Xizt GeT_RiGhT Fifflaren friberg f0rest |
| Team Envy | 2015-09-10 | 1814 | Happy NBK kioShiMa kennyS apEX |
| Natus Vincere | 2020-03-01 | 1791 | flamie s1mple electronic Boombl4 Perfecto |
| SK Gaming | 2008-10-23 | 1790 | allen Tentpole walle RobbaN zet |

I merge teams with similar rosters in adjacent years, so 2018 Astralis (1924) isn’t 2nd on the list but grouped with 2019 Astralis. Likewise 2013 NiP (1814) is grouped with their 2014 year. In general, Scandinavian teams dominate the top 20, and only one American roster made the list.

Some of these peaks last several years. Some have high roster overlap: SoloMid in 2015 looks a lot like Astralis in 2019. Sometimes cores span multiple teams: GeT_RiGhT and f0rest dominate from 2009 to 2014 while playing for fnatic, SK and NiP. karrigan shows up four times. NAVI, SK, mouz, NiP and fnatic climb to the top several times with different rosters.

I particularly loved the Golden Five. I grouped them across several clan tags (AGAiN, ESC, MYM). They climb fast, particularly as Frag eXecutors, formed in Feb 2010 and lasting only 20 months. That short span ends with 1st place in WEG 2011 (2:1 over SK) in August and 1st place in SEC 2011 (2:0 over NAVI) in October. A few months later (as ESC), they win WCG (over SK) and then IEM IV (over NAVI). Those SK and NAVI rosters both made the list above.

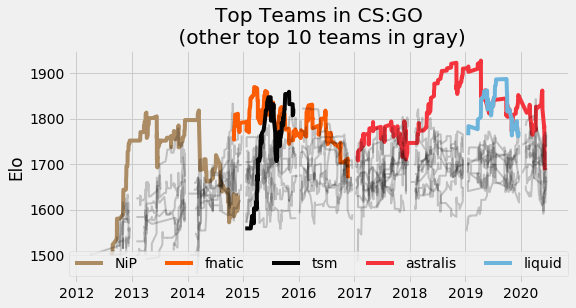

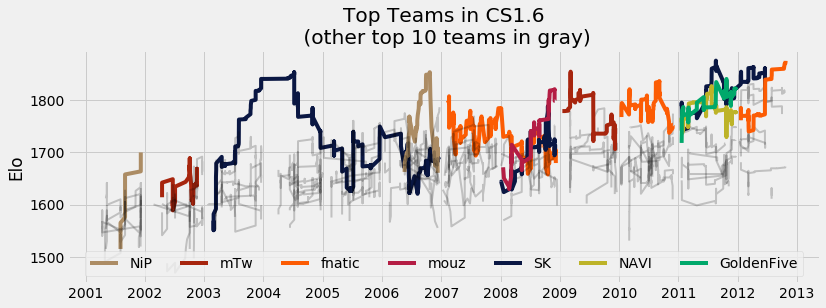

Each of these teams deserves at least a few words, some analysis, and maybe even a highlight reel. Sadly, I don’t have the time right now (but can collaborate, contact me!). But I will make some hasty graphs.

These teams peak, by definition, in tournaments that they lost. A peak Elo is when that team was the biggest favorite. Being the peak, losses must surely have followed.

Top Teams in June 2020

At the time of writing, the top 12 teams by Elo are

| Team | Elo | Team | Elo |
|---|---|---|---|
| G2 | 1770 | NiP | 1678 |
| Vitality | 1738 | Lions | 1673 |
| FaZe | 1710 | ENCE | 1667 |
| FURIA | 1705 | NAVI | 1659 |
| Astralis | 1687 | BIG | 1650 |
| fnatic | 1683 | Liquid | 1645 |

This largely overlaps with current HLTV Rankings, which is a minor success.

The 5 Best Counter-Strike Teams of Every Year

To find a few more gems, I’ll list the top 5 teams in every year. These use a quadratic mean of the team’s Elo score across all of their Elo updates that year. The choice of quadratic mean should bias these towards their larger results.

- 2000 clanz (1564), dop (1546), eyeballers (1541), soa (1529), mtw (1521)

- 2001 nip (1620), soa (1590), sk (1585), mtw (1573), lnd (1572)

- 2002 mtw (1638), eolithic (1627), mouz (1599), sk (1585), m19 (1571)

- 2003 sk (1725), eolithic (1677), team9 (1662), mtw (1659), team3d (1639)

- 2004 sk (1802), eyeballers (1702), titans (1655), mouz (1651), mibr (1650)

- 2005 mouz (1704), nip (1701), sk (1680), noa (1668), complexity (1654)

- 2006 nip (1766), fnatic (1714), complexity (1714), wnv (1688), sk (1677)

- 2007 fnatic (1746), pentagram (1717), noa (1700), sk (1697), mibr (1689)

- 2008 mouz (1738), sk (1714), fnatic (1709), mtw (1708), mibr (1686)

- 2009 mtw (1775), fnatic (1737), mouz (1729), sk (1716), mibr (1708)

- 2010 fnatic (1787), vg (1745), sk (1743), mtw (1739), eg (1702)

- 2011 vg (1813), sk (1812), goldenfive (1796), navi (1779), mtw (1732)

- 2012 sk (1845), vg (1832), goldenfive (1809), navi (1808), fnatic (1802)

- 2013 nip (1764), vg (1692), fnatic (1607), cphwolves (1600), vp (1592)

- 2014 ldlc (1704), fnatic (1697), vp (1673), nip (1672), navi (1652)

- 2015 fnatic (1811), tsm (1759), envy (1727), navi (1725), vp (1707)

- 2016 fnatic (1775), tsm (1753), ldlc (1747), navi (1741), lg (1710)

- 2017 astralis (1756), sk (1738), faze (1734), gambit (1689), g2 (1671)

- 2018 astralis (1856), faze (1766), sk (1733), tl (1714), mouz (1706)

- 2019 astralis (1841), tl (1824), nrg (1721), ence (1718), mouz (1710)
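The yearly figures above are quadratic means (root mean squares) of each team's rating. A minimal sketch of that calculation, with a hypothetical function name and made-up sample ratings:

```python
import math


def quadratic_mean(ratings) -> float:
    """Root mean square of a team's Elo values over one year of updates."""
    ratings = list(ratings)
    if not ratings:
        raise ValueError("need at least one rating")
    return math.sqrt(sum(r * r for r in ratings) / len(ratings))


if __name__ == "__main__":
    season = [1400.0, 1800.0]  # made-up ratings for illustration
    print(sum(season) / len(season))  # arithmetic mean
    print(quadratic_mean(season))     # quadratic mean, pulled toward the higher value
```

The quadratic mean is never below the arithmetic mean, and the gap grows with the spread of the ratings, which is why it tilts the yearly lists toward a team's stronger stretches.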

Here we see Norwegian teams (eolithic, noa), Russian teams (m19, vp), North American teams (3D, complexity, EG), a South American team (mibr) and a Chinese team (wnv). These teams even have multiple top-5 appearances and so they probably qualify for some consideration as “the best Counter-Strike teams ever”. SK (14 times), fnatic (10 times), Mouz (7 times) seem like constants in Counter-Strike. MiBR makes the list 4 times in 5 years. NAVI makes the list 5 times in 6 years. NiP has three number one finishes, 5 years apart. Astralis from ‘17 to ‘19 is the only 3 year span in first place. SK (‘03 to ‘04) and fnatic (‘15 to ‘16) manage two year spans. VeryGames is playing CS:Source and leads from ‘11 to ‘13.

Gaining points in Elo requires directly beating good teams on a regular basis. If the European scene has more players, more teams and more competitions, then their best teams would obtain higher Elo scores than the best North American teams playing the same number of games. Using something like logistic regression over batches of games may provide a better rating system than Elo, as it wouldn’t suffer from this failure. HLTV’s system of assigning points to winners of tournaments also avoids this problem.

Conclusion

I’d probably rank the greatest Counter-Strike teams of all time as

- 2019 Astralis

- 2013 Ninjas in Pyjamas

- 2003 SK Gaming

- 2015 fnatic

- 2011 Golden Five

2019 Astralis has the highest peak Elo and the highest annual QM Elo. Their longevity is largely unrivaled in a crowded field. They’re a shoo-in for the number 1 position.

2013 NiP is the runner-up. Since ratings are reset for each game, this Elo system believes NiP is beating up on fairly weak teams. However, their annual quadratic mean (aQM) Elo is 150 points above second place, the largest such gap. They open the era of CS:GO, and the core of f0rest and GeT_RiGhT is on the number 1 team during the 2010 (fnatic), 2011-12 (sk) and 2013 (nip) seasons. In an Elo system where ratings are not reset, 2012 NiP’s peak is 2nd only to Astralis (and just narrowly ahead of 2015 fnatic and 2019 Liquid).

For the third slot, 2003 SK seems like a worthwhile team. Winning 2 CPLs and WCG earns them 1% of all CS1.6 prize money ever awarded, in four months. Their 100 point aQM Elo lead over the second place team is second to only the early CS:GO NiP team.

2015 fnatic deserves the fourth slot. They have a two year span in first place and are 70 points ahead of the competition in aQM for that year. They narrowly miss a 3 year streak for number one and, nestled between NiP, TSM and Astralis, have an important place in the history of the game.

The last slot comes down to the end of CS1.6 in late 2011 and early 2012. There are four teams with plausible claims: SK Gaming, NAVI, fnatic and the Golden Five. SK Gaming has the highest aQM in 2011 and 2012; their Elo peak is 3rd all time. NAVI is no slouch themselves, winning IEM V and knocking SK out of Dreamhack in Nov ‘11 and SEC in Oct ‘11. fnatic is the “final” team of CS1.6, taking the fourth highest peak Elo of all time right when CS:GO comes out by taking 1st place in CS1.6’s final 6 events, beating NAVI 3 times in the finals (and SK once). But my pick for fifth is the 2011 Golden Five (as Frag eXecutors & ESC). They have back-to-back, head-to-head finals wins over those NAVI & SK rosters. Twice! They win WEG+WCG over SK and SEC+IEM over NAVI.

The rest of the top 10 seems harder to fill out, but good candidates include several CS1.6 teams (2006 NiP, 2009 mTw, 2008 mouz, 2010 fnatic, 2008 SK) and CS:GO teams (2019 Liquid, 2015 LDLC, 2015 Virtus.pro, 2018 FaZe). As honorable mentions, VeryGames is the undisputed champion of CS:Source while TYLOO holds the crown for CS:Online. VG is so dominant at Source that they obtain the 2011 peak Elo, even though the field is smaller (and hence Elo harder to gain).

If you thought this was fun and have your own top 10 list, please make it and share!

Article version 1.1 notes

Getting the “right” Elo algorithm for CS was not easy, even when I ignored roster turnover, gameplay changes and map selection. Compared to NBA or NFL analysis, the schedule in eSports is chaos. There are thousands of teams playing dozens of events. Some teams play weekly events while others’ presence is only felt every few months at major competitions. These events vary in prestige, prize pool and turnout. While similar issues are present in chess (where Elo was initially designed), the online vs offline nature of eSports complicates things further.

The first version of this article only followed S-Tier and A-Tier events and ignored which game was being played. After getting some feedback, I reset team ratings after N weeks of inactivity. Then I split ratings between 1.6, Source and GO. Then I made a smooth version of “regression to the mean” based on when a team last played. Then I added all tiers of competitions, increasing my dataset tenfold. By then, my scoring function got much more complicated. How do I weigh all the events appropriately? I added more variations to my Elo algorithm, allowing it to take into account rating and score differences. I added potential for non-linear behavior at several steps. Traditional Elo has 1 parameter: \(K\). My algorithm had 30. In every variation, certain teams would always do well: 2003 SK, 2006 NiP, ‘08 fnatic, ‘09 mtw, ‘11/‘12 SK/ESC, VG in CS:S, 2013 NiP, ‘15 fnatic, ‘18 Astralis and ‘19 Liquid are always notable and at the top. The micro-narratives would change: is ‘13 NiP an all-time great or just facing weak competition? Is the peak of ‘18 Astralis better than ‘15 fnatic? Some parameters lead to teams rising/falling more rapidly, while others are more slow-and-steady. All the variations had roughly similar accuracy in predicting games (about 65% of top tier games, similar to basic methods in the NBA).

Following ideas behind valuing information, I decided to tear down all my work and start again. Events are corrected for their frequency, and LAN is valued 3x more than online, S tier 3x more than A tier, and A tier 3x more than everything else. I threw out my old settings and found new ones by maximizing the likelihood of my model, ensuring rating differences are as meaningful as possible. After 48 hours of multicore simulation and 50,000 configurations, I had a good answer and updated this article to reflect it. It’s not perfect but it’ll have to do for now.
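For the curious, the 3x weighting rules can be sketched as a single multiplier per event. This assumes the factors simply multiply together, and it leaves out the frequency correction, whose exact form isn't spelled out here. The names `TIER_STEPS` and `event_weight` are hypothetical.

```python
# Number of 3x steps above the baseline (lower-tier) events.
TIER_STEPS = {"S": 2, "A": 1}


def event_weight(tier: str, is_lan: bool) -> float:
    """Relative weight of an event: 3x per tier step, 3x more for LAN.

    Assumes the tier and LAN factors combine multiplicatively.
    Any tier other than "S" or "A" is treated as the baseline.
    """
    weight = 3.0 ** TIER_STEPS.get(tier, 0)
    if is_lan:
        weight *= 3.0
    return weight
```

Under these assumptions an S-Tier LAN counts 27 times as much as a lower-tier online match.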